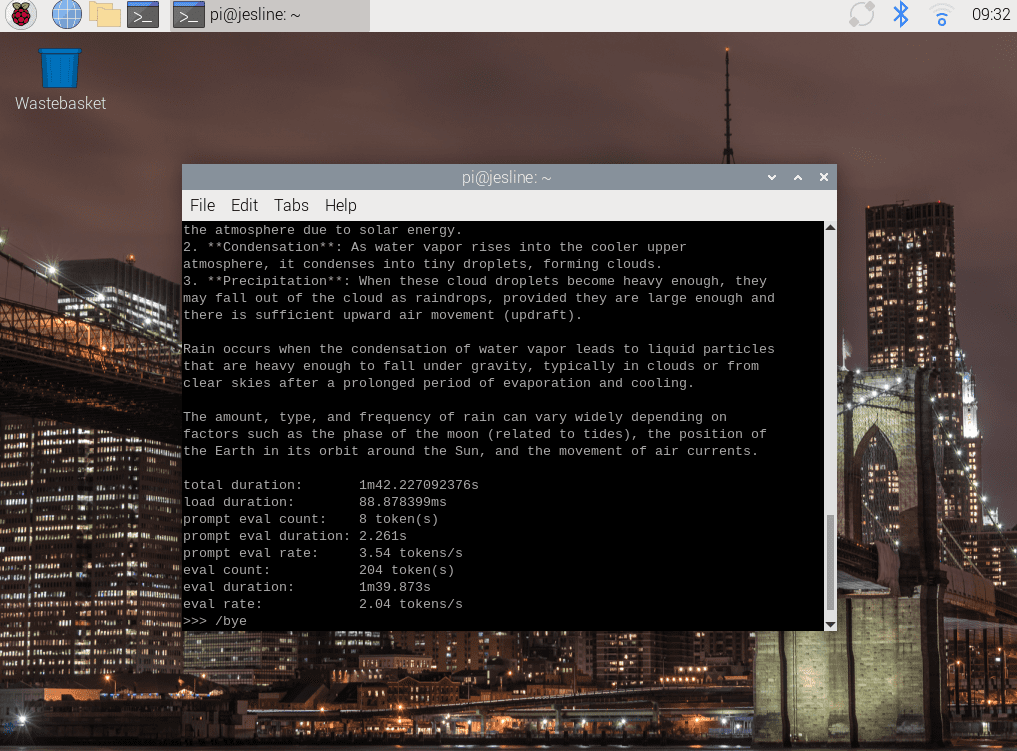

Proof That DeepSeek ChatGPT Actually Works

This method ensures that the final training data retains the strengths of DeepSeek-R1 while producing responses that are concise and effective. Big Story: Are you lonely? Qwen and DeepSeek are two representative model series with robust support for both Chinese and English. If you ask Alibaba’s primary LLM (Qwen) what happened in Beijing on June 4, 1989, it will not present any information about the Tiananmen Square massacre. "So we will probably move them to the border," he said. We will consistently study and refine our model architectures, aiming to further improve both training and inference efficiency, striving to approach efficient support for infinite context length. Specifically, while the R1-generated data demonstrates strong accuracy, it suffers from issues such as overthinking, poor formatting, and excessive length. Our experiments reveal an interesting trade-off: the distillation leads to better performance but also substantially increases the average response length. 1) Compared with DeepSeek-V2-Base, due to the improvements in our model architecture, the scale-up of the model size and training tokens, and the enhancement of data quality, DeepSeek-V3-Base achieves significantly better performance, as expected. Either it has better things to do, or it doesn’t.

These moves highlight the large impact of open-source licensing: once a model’s weights are public, it doesn’t matter whether they originated in Beijing or Boston. AI search company Perplexity, for example, has announced the addition of DeepSeek’s models to its platform, and told its users that its DeepSeek open-source models are "completely independent of China" and are hosted on servers in data centers in the U.S. More recently, Google and other tools have begun offering AI-generated, contextual responses to search prompts as the top result of a query. By providing access to its robust capabilities, DeepSeek-V3 can drive innovation and improvement in areas such as software engineering and algorithm development, empowering developers and researchers to push the boundaries of what open-source models can achieve in coding tasks. This underscores the robust capabilities of DeepSeek-V3, especially in handling complex prompts, including coding and debugging tasks. In the coding domain, DeepSeek-V2.5 retains the powerful code capabilities of DeepSeek-Coder-V2-0724.

DeepSeek-AI (2024a) DeepSeek-AI. DeepSeek-Coder-V2: Breaking the barrier of closed-source models in code intelligence. Evaluating large language models trained on code. In this paper, we introduce DeepSeek-V3, a large MoE language model with 671B total parameters and 37B activated parameters, trained on 14.8T tokens. 2) Compared with Qwen2.5 72B Base, the state-of-the-art Chinese open-source model, with only half of the activated parameters, DeepSeek-V3-Base also demonstrates remarkable advantages, especially on English, multilingual, code, and math benchmarks. In algorithmic tasks, DeepSeek-V3 demonstrates superior performance, outperforming all baselines on benchmarks like HumanEval-Mul and LiveCodeBench. Plugins can provide real-time information retrieval, news aggregation, document search, image generation, data acquisition from platforms like Bilibili and Steam, and interaction with third-party services. DeepSeek claims in a company research paper that its V3 model, which can be compared to a standard chatbot model like Claude, cost $5.6 million to train, a number that has circulated (and been disputed) as the entire development cost of the model. DeepSeek is an AI development firm based in Hangzhou, China. The development of DeepSeek has heightened his concerns. Australia raises concerns about the technology - so is it safe to use? The biggest win is that DeepSeek is cheaper to use as an API and generally faster than o1.
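The parameter counts quoted above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the 671B, 37B, and 72B figures from the text; it assumes (this is not stated in the text) that Qwen2.5 72B is a dense model whose activated parameter count equals its total size. The variable names are illustrative.

```python
# Figures taken from the text above, in billions of parameters.
TOTAL_PARAMS_B = 671.0       # DeepSeek-V3 total parameters
ACTIVATED_PARAMS_B = 37.0    # DeepSeek-V3 parameters activated per token

# Assumption: Qwen2.5 72B is dense, so all 72B parameters are active per token.
QWEN_ACTIVATED_PARAMS_B = 72.0

# Share of DeepSeek-V3's parameters that are active for any one token.
activated_fraction = ACTIVATED_PARAMS_B / TOTAL_PARAMS_B

# Activated parameters relative to the Qwen baseline ("only half" in the text).
ratio_vs_qwen = ACTIVATED_PARAMS_B / QWEN_ACTIVATED_PARAMS_B

print(f"Activated fraction of DeepSeek-V3: {activated_fraction:.1%}")
print(f"Activated parameters vs. Qwen2.5 72B: {ratio_vs_qwen:.2f}x")
```

Under these assumptions, roughly one parameter in eighteen is active per token, and the "only half of the activated parameters" comparison is an approximation of the 37B to 72B ratio.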

DeepSeek-AI (2024b) DeepSeek-AI. DeepSeek LLM: Scaling open-source language models with longtermism. DeepSeekMoE: Towards ultimate expert specialization in mixture-of-experts language models. For the second challenge, we also design and implement an efficient inference framework with redundant expert deployment, as described in Section 3.4, to overcome it. This expert model serves as a data generator for the final model. The rollout of DeepSeek’s R1 model and subsequent media attention "make DeepSeek an attractive target for opportunistic attackers and those seeking to understand or exploit AI system vulnerabilities," Kowski said. Design approach: DeepSeek’s MoE design allows task-specific processing, potentially improving performance in specialized areas. This highly efficient design enables optimal performance while minimizing computational resource usage. The effectiveness demonstrated in these specific areas indicates that long-CoT distillation could be valuable for enhancing model performance in other cognitive tasks requiring complex reasoning. DeepSeek-V3 takes a more innovative approach with its FP8 mixed precision framework, which uses 8-bit floating-point representations for specific computations. For questions that can be validated using specific rules, we adopt a rule-based reward system to determine the feedback. To be specific, in our experiments with 1B MoE models, the validation losses are: 2.258 (using a sequence-wise auxiliary loss), 2.253 (using the auxiliary-loss-free method), and 2.253 (using a batch-wise auxiliary loss).
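To make the idea of a rule-based reward more concrete, here is a minimal sketch of one possible rule: the response earns a reward only if its final answer, written in a `\boxed{...}` marker, exactly matches a reference answer. The boxed-answer convention, the function names `extract_boxed` and `rule_based_reward`, and the 0/1 scoring are all assumptions made for illustration; the text above does not specify how DeepSeek's rules are implemented.

```python
import re
from typing import Optional


def extract_boxed(text: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} in text, or None if absent.

    Hypothetical helper: only handles answers without nested braces.
    """
    matches = re.findall(r"\\boxed\{([^{}]*)\}", text)
    return matches[-1].strip() if matches else None


def rule_based_reward(response: str, reference: str) -> float:
    """Score a response with a deterministic rule instead of a learned model.

    Returns 1.0 when the boxed final answer equals the reference string,
    and 0.0 when it differs or when no boxed answer is present.
    """
    answer = extract_boxed(response)
    if answer is None:
        return 0.0  # Formatting rule violated: no final answer to check.
    return 1.0 if answer == reference.strip() else 0.0


if __name__ == "__main__":
    good = "Adding the two terms gives \\boxed{42}."
    bad = "Adding the two terms gives \\boxed{41}."
    unformatted = "The answer is 42."
    for reply in (good, bad, unformatted):
        print(rule_based_reward(reply, "42"))
```

Because the check is a fixed rule, the same response always receives the same score, which is what makes this kind of feedback suitable for questions with verifiable answers.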
